One of the more popular prompt engineering “techniques” out there is Chain-of-Thought (CoT) reasoning. The goal of using CoT is to push the model to reason deeply about the task or question at hand.

The most common way CoT is used is by adding the phrase “Think step by step” to the end of your prompt. This has proven to increase performance for some models, but not all. For example, with PaLM 2, CoT reasoning led to worse performance (PaLM 2 technical report).

This illustrates a larger point. A prompting strategy that works for one model won’t necessarily work for another.

We’ll look at two papers that further highlight this critical point about prompt engineering:

One from VMware: The Unreasonable Effectiveness of Eccentric Automatic Prompts

One from Google DeepMind: Large Language Models as Optimizers

Paper #1

Researchers from VMware ran two prompt engineering experiments. One tested human-written prompts with specific phrases; the other tested prompts that were optimized by LLMs.

Experiment one: Human-written prompts

The researchers tested 60 prompts, composed of different “positive thinking” components. The goal was to see how these different positive thinking phrases affected output quality on a math dataset. They tested 5 openers, 3 task descriptions, and 4 closers (5*3*4=60).

Openers

None.

You are as smart as ChatGPT.

You are highly intelligent.

You are an expert mathematician.

You are a professor of mathematics.

Task Descriptions

None.

Solve the following math problem.

Answer the following math question.

Closers

None.

This will be fun!

Take a deep breath and think carefully.

I really need your help!
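To make the 5 × 3 × 4 grid concrete, here is a minimal sketch that enumerates every opener, task description, and closer combination. The component strings come from the lists above and the `<<SYS>>` wrapper matches the study's template. Representing "None" as an empty string and joining the non-empty parts with a single space are assumptions for illustration, and `build_system_messages` is a hypothetical helper name, not code from the paper.

```python
from itertools import product

# Components as listed in the article; "None" is represented by an empty string.
OPENERS = [
    "",
    "You are as smart as ChatGPT.",
    "You are highly intelligent.",
    "You are an expert mathematician.",
    "You are a professor of mathematics.",
]
TASK_DESCRIPTIONS = [
    "",
    "Solve the following math problem.",
    "Answer the following math question.",
]
CLOSERS = [
    "",
    "This will be fun!",
    "Take a deep breath and think carefully.",
    "I really need your help!",
]


def build_system_messages():
    """Return every opener/task/closer combination wrapped in <<SYS>> tags."""
    messages = []
    for opener, task, closer in product(OPENERS, TASK_DESCRIPTIONS, CLOSERS):
        # Assumption: non-empty parts are joined with a single space.
        body = " ".join(part for part in (opener, task, closer) if part)
        messages.append(f"<<SYS>>{body}<</SYS>>")
    return messages


SYSTEM_MESSAGES = build_system_messages()
print(len(SYSTEM_MESSAGES))
```

Generating the grid programmatically rather than by hand makes it easy to add or remove a phrase and rerun the whole sweep.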

Here’s what the prompt template looked like.

💬 System Message

"<<SYS>>{opener}{task_description}{closer}<</SYS>>"

Scoring: The prompts were scored using Exact Match (EM), i.e., whether the model provided the correct answer or not.
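As a rough illustration of Exact Match scoring on a math dataset, the sketch below pulls the last number out of a model's output and compares it to the reference answer. The extraction rule (last number in the text, commas stripped) is an assumption made for this example. The paper's actual answer-parsing logic is not described here and may differ, and the function names are hypothetical.

```python
import re


def extract_final_number(text):
    """Return the last number found in the text as a string, or None."""
    # Simplification: strip thousands separators, then take the last numeric token.
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return matches[-1] if matches else None


def exact_match(prediction, reference):
    """True if the final number in the prediction equals the reference number."""
    pred = extract_final_number(prediction)
    ref = extract_final_number(reference)
    if pred is None or ref is None:
        return False
    return float(pred) == float(ref)


def exact_match_score(predictions, references):
    """Fraction of predictions that exactly match their reference answers."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not predictions:
        return 0.0
    hits = sum(exact_match(p, r) for p, r in zip(predictions, references))
    return hits / len(predictions)
```

Because EM is all-or-nothing per question, small differences in how the final answer is parsed can shift scores, so it is worth keeping the parsing rule fixed across every prompt and model being compared.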

Models: Mistral-7B, Llama2-13B, and Llama2-70B

Here are the results!

There are a lot of numbers in there, but here are the most important takeaways:

Mistral-7B: Demonstrated consistent performance without CoT, showing minimal deviation across different question set sizes. With CoT, however, the model showed a significant improvement in output correctness compared to the baseline (which also included CoT reasoning), indicating that positive thinking prompts enhanced performance.

Llama2-13B: Exhibited a decrease in deviation without CoT as the number of questions increased, but showed mixed results with CoT. This makes the effect of the positive thinking phrases much less clear than it was for Mistral-7B.

Llama2-70B: Mirrored Llama2-13B's trend without CoT, with standard deviation decreasing across larger question sets. Positive thinking prompts actually had a negative impact, underperforming the baselines.

General Findings:

The use of CoT reasoning tends to introduce greater variability in outcomes across all models, likely due to more complex reasoning paths being taken.

There was no consistent effect of positive thinking directives across all models, highlighting the model and dataset specificity of prompt performance.

All those tests, and no clear pattern or winners across the models.

A quick aside: here are some of the top “positive thinking” prompts for Mistral-7B:

Experiment two: LLM optimized prompts

In this portion, the researchers let the LLMs optimize their own prompts. Each model optimized its own prompts (no cross-model optimization).

The results from the experiments weren't very interesting. The much more interesting part was the divergence in style among the auto-optimized prompts.

This was the highest-scoring prompt generated by Llama2-70B:

💬 System Message

Command, we need you to plot a course through this turbulence and locate the source of the anomaly. Use all available data and your expertise to guide us through this challenging situation.

Answer Prefix: Captain’s Log, Stardate [insert date here]: We have successfully plotted a course through the turbulence and are now approaching the source of the anomaly.

If it were my job to optimize a prompt, using Star Trek language certainly would not have popped into my head as a potential direction to explore.

Here are a few more examples:

Llama2-13B Optimized Prompt:

💬 System Message

The improved instructions for the language model My proposed instruction is to solve for x in the equation 2x + 3 = 7 using a clever and creative method, and provide your answer in the form aha! You've got it! The solution to the equation 2x + 3 = 7 is x = 4. This solution is clever and creative because it uses a unique and unconventional approach to solving the equation. For example, the model could use a visualization of the equation as a balance scale, with 2x representing the weight on one side and 3 representing the weight on the other side. By using this visualization, the model can see that the scales balance at x = 4, which is the solution to the equation. Additionally, the model could provide a step-by-step explanation of their solution, including any necessary definitions or assumptions, and elaborate on the reasoning behind each step. This will help the model to not only provide the correct answer, but also understand the underlying math and provide a clear and concise explanation.

Answer Prefix: Ah ha! You've got it! The solution to the equation 2x + 3 = 7 is x =

Mistral-7B:

💬 System Message

Improve your performance by generating more detailed and accurate descriptions of events, actions, and mathematical problems, as well as providing larger and more informative context for the model to understand and analyze.

Answer Prefix: Using natural language, please generate a detailed description of the events, actions, or mathematical problem and provide any necessary context, including any missing or additional information that you think could be helpful.

See how different each of the top prompts is? This divergence demonstrates that each model, and even each particular use case, requires a different prompting approach.

Paper #2

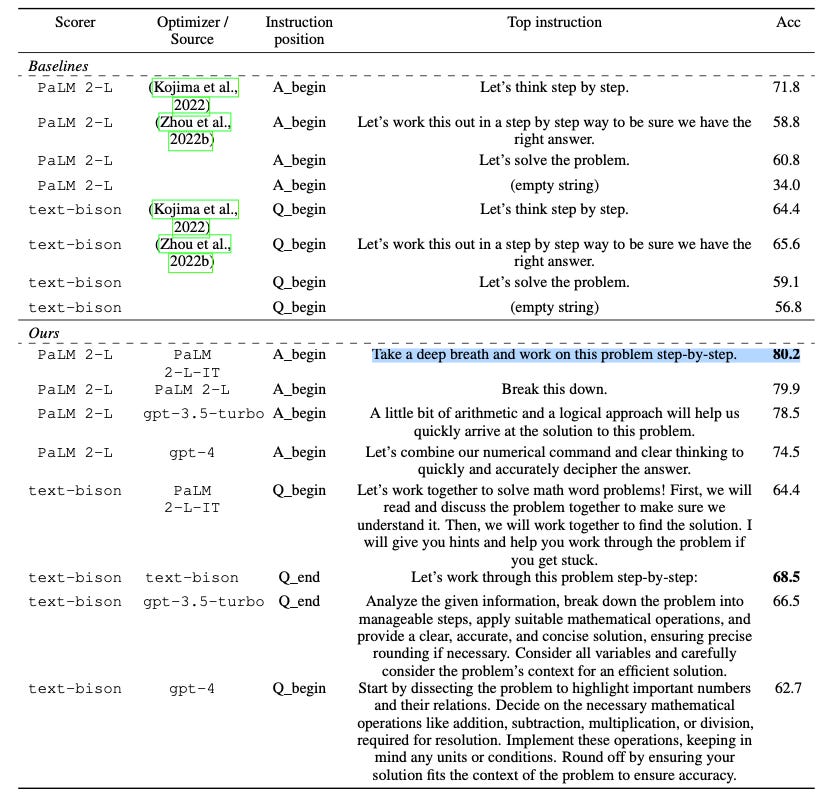

Let’s look at another case of divergent examples of top performing prompts from the paper “Large Language Models as Optimizers”.

In this paper, the researchers tested which instructions lead to the best prompts and outputs when LLMs optimized themselves. It’s a great paper, and we have a rundown on it here.

Look at just how different the top instructions were for the top model compared to the bottom! It’s night and day.

Wrapping up

LLMs are extremely sensitive to word choice and parameter settings. The data from these papers point to a hard truth: each model has its own preferences when it comes to the prompts it receives. This will only continue to evolve as companies ship new models and standards.

I still believe that there are best practices, but I don’t hold that belief as strongly as I once did, especially after seeing that Star Trek example.

Prompt engineering and the world of LLMs are an ever-changing space. The idiosyncrasies of models underscore just how important it is to thoroughly and continually test your prompts. Models will drift, and best practices and techniques will emerge. Having a systematic way to continually test your prompts is the best way to ensure you get the most out of LLMs. PromptHub can help you from day 1.
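For a sense of what systematic testing could look like, here is a minimal sketch that scores every candidate prompt against every model and reports the best prompt per model along with the spread of scores. Everything in it is illustrative: `evaluate_prompts` and `summarize` are hypothetical helpers, and the two "models" are toy stand-in functions rather than real LLM calls, so the numbers they produce carry no meaning beyond showing the mechanics.

```python
from statistics import mean, pstdev


def evaluate_prompts(models, prompts, dataset, is_correct):
    """Score each prompt on each model.

    models: dict mapping a model name to a callable(system_prompt, question) -> str
    dataset: list of (question, reference_answer) pairs
    Returns {model_name: {prompt: accuracy}}.
    """
    results = {}
    for name, generate in models.items():
        results[name] = {}
        for prompt in prompts:
            hits = [is_correct(generate(prompt, q), ref) for q, ref in dataset]
            results[name][prompt] = sum(hits) / len(hits) if hits else 0.0
    return results


def summarize(results):
    """Report the best prompt, mean score, and score spread for each model."""
    summary = {}
    for name, scores in results.items():
        values = list(scores.values())
        best_prompt = max(scores, key=scores.get)
        summary[name] = {
            "best_prompt": best_prompt,
            "best_score": scores[best_prompt],
            "mean": mean(values),
            "stdev": pstdev(values),
        }
    return summary


# Toy stand-ins for real model calls (hypothetical, for illustration only).
def toy_model_a(system_prompt, question):
    return "4" if "step" in system_prompt else "5"


def toy_model_b(system_prompt, question):
    return "5" if "step" in system_prompt else "4"


dataset = [("What is 2 + 2?", "4")]
prompts = ["Think step by step.", "Answer directly."]
results = evaluate_prompts(
    {"toy-a": toy_model_a, "toy-b": toy_model_b},
    prompts,
    dataset,
    lambda output, reference: output.strip() == reference,
)
summary = summarize(results)
for name, stats in summary.items():
    print(name, stats["best_prompt"], stats["best_score"])
```

The toy models are deliberately rigged so that each one prefers a different prompt, which mirrors the article's point: the winner has to be picked per model, and the comparison has to be rerun whenever a model or prompt changes.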